Computer Science Education & Didactics

Researchers: Willem Lapage, Natacha Gesquière, Tom Neutens and Francis wyffels

Introduction

AIRO is committed to improving computer science and STEM education. We develop evidence-based tools that support the learning process for both teachers and students. To achieve this goal, we perform field research, collecting relevant quantitative and qualitative data through our interactions with teachers and students. We combine this data with our experience in computer science to develop tools that meet educational requirements.

Learners

A large part of our research goes into analyzing how learners learn to program and how the learning context influences their behavior. To analyze the learning process, we need to collect programming data from students who are learning to program. We therefore set up a programming environment that logs the programmer's interactions and code. This lets us understand how students approach computing tasks. In the Create or Fix experiment, we identified differences between two teaching techniques for computing: creating programs and fixing incorrect programs. For instance, debugging incorrect programs generally involved fewer interactions with the code but more program executions than creating programs from scratch.
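The kind of comparison described above can be illustrated with a minimal Python sketch. It assumes a hypothetical log format in which every event records a student, an experimental condition ("create" or "fix") and an event kind ("edit" for a code change, "run" for a program execution); the names `Event` and `summarize` are illustrative and do not describe the actual logging environment. The function averages the number of edits and runs per student in each condition.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean

KINDS = ("edit", "run")


@dataclass(frozen=True)
class Event:
    """One logged interaction (hypothetical schema, for illustration only)."""
    student_id: str
    condition: str  # assumed values: "create" or "fix"
    kind: str       # assumed values: "edit" (code change) or "run" (execution)


def summarize(events):
    """Return {condition: {"edit": mean per student, "run": mean per student}}."""
    # counts[condition][student][kind] -> number of logged events
    counts = defaultdict(lambda: defaultdict(lambda: {k: 0 for k in KINDS}))
    for e in events:
        if e.kind in KINDS:  # ignore event kinds this sketch does not model
            counts[e.condition][e.student_id][e.kind] += 1
    return {
        condition: {k: mean(s[k] for s in students.values()) for k in KINDS}
        for condition, students in counts.items()
    }


# Toy input invented for illustration; these are not experimental results.
log = [
    Event("s1", "create", "edit"), Event("s1", "create", "edit"),
    Event("s1", "create", "run"),
    Event("s2", "fix", "edit"), Event("s2", "fix", "run"), Event("s2", "fix", "run"),
]
print(summarize(log))
```

Averaging per student rather than over all events keeps one very active student from dominating a condition's figures.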

Educators

The main goal of our teacher-centered research is to identify what prevents teachers from implementing STEM subjects in their classrooms. Teachers often cite their own shortcomings as the reason they hesitate to teach certain STEM subjects. Identifying these shortcomings and finding ways for teachers to overcome them is an important part of our research. Several of our experiments have shown that teachers often lack the content knowledge they need to feel confident teaching STEM subjects. One skill they frequently lack is programming. Consequently, much of our research focuses on facilitating the integration of programming into primary and secondary education. More recently, we have broadened our perspective from improving the teaching of programming to also improving its assessment. Our aim is to create a didactical framework that teachers can use to assess programming assignments effectively.

Tools

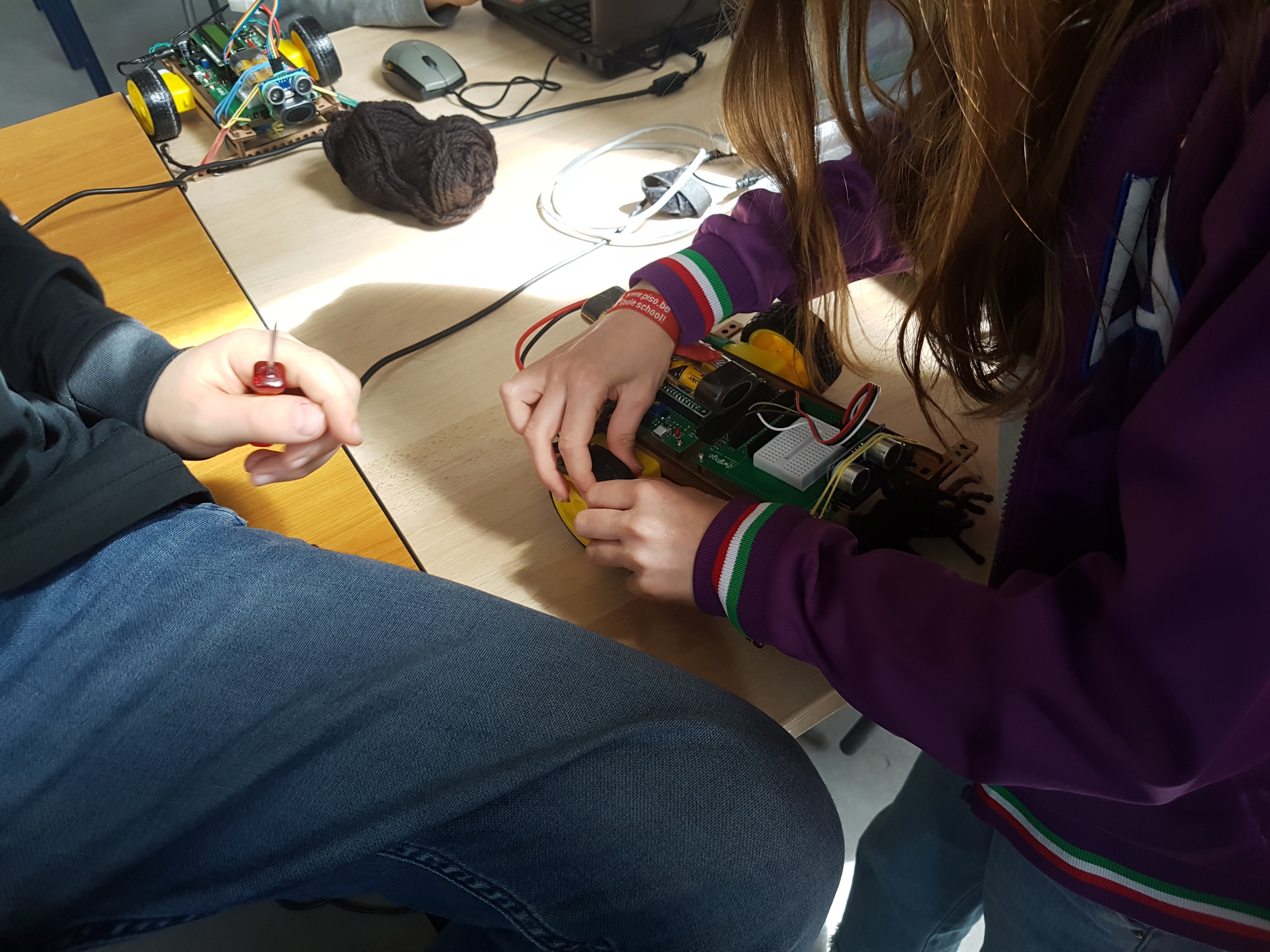

To have complete control over the research data we collect, we design and develop all the necessary tools in-house, so that they fit our experimental designs exactly. These tools include the hardware and software required for teaching physical computing, as well as research-based, classroom-validated instructional designs. Everything we produce is offered to the teaching community free of charge under a Creative Commons license. Examples include the WeGoSTEM and AI at School projects. More information about other projects can be found on the outreach page.

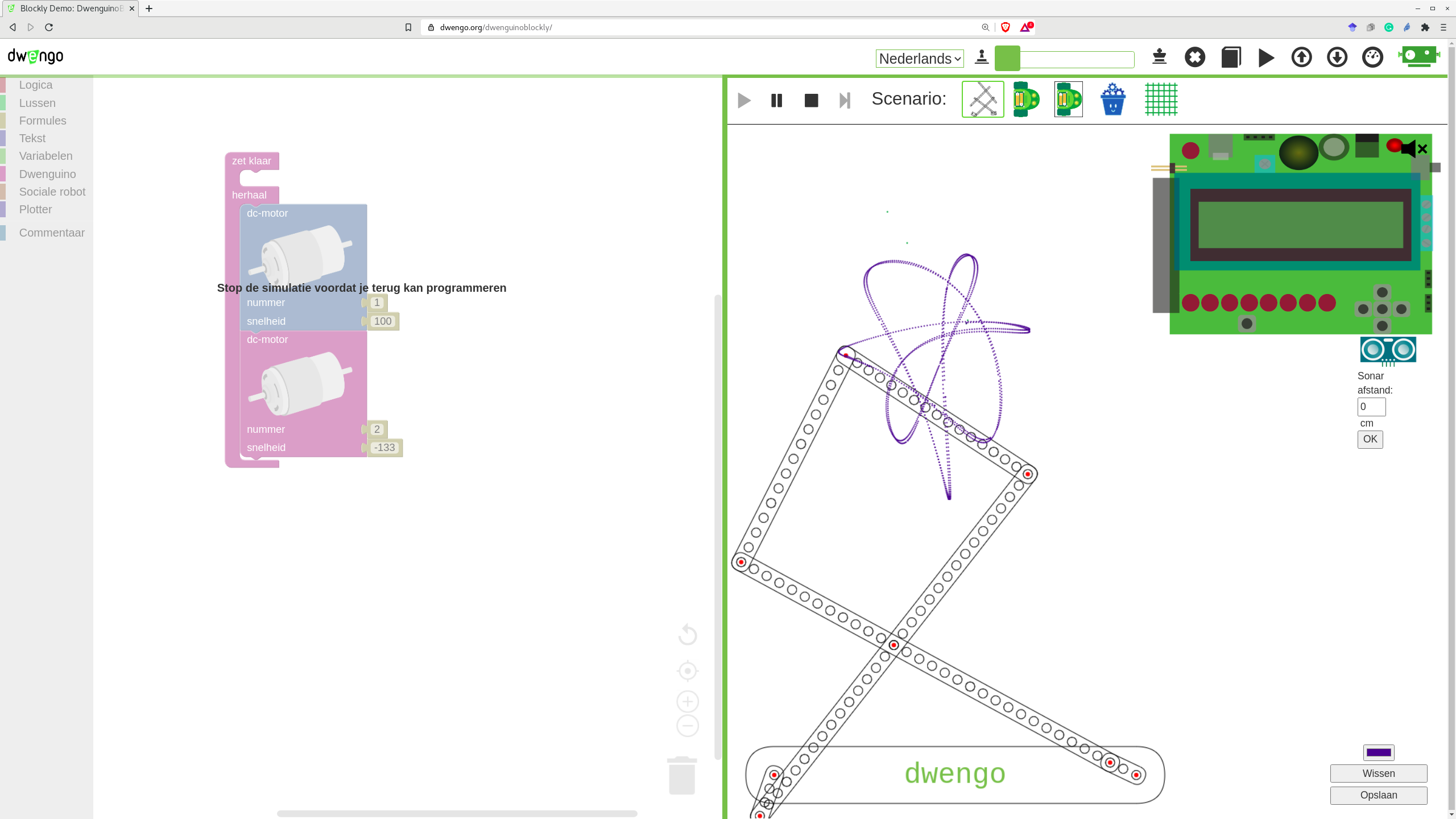

Dwenguino Simulator

Since previous research has shown that teaching programming through physical systems has many pedagogical advantages, we have created a graphical programming environment that allows learners to program a physical system. These physical systems are based on the Dwenguino microcontroller platform, which was specifically designed to facilitate the use of physical systems in the classroom and is modular enough to build many different physical systems. Consequently, a wide range of physical systems can be controlled from our programming environment. The environment also includes a simulator that decouples learning to program from dealing with the limitations of the physical world, which simplifies the learning experience.
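The decoupling that a simulator provides can be sketched as a common interface with interchangeable back ends: the learner's program talks only to the interface, so the same program can run against an idealized simulated board or, in principle, a physical one. This is a minimal Python sketch under that assumption; `Board`, `SimulatedBoard`, `set_led`, `read_button`, the LED count and the button name "N" are hypothetical and are not the Dwenguino or Dwenguino Simulator API. The physical back end is omitted.

```python
from abc import ABC, abstractmethod


class Board(ABC):
    """Hypothetical hardware interface (not the real Dwenguino API)."""

    @abstractmethod
    def set_led(self, index: int, on: bool) -> None: ...

    @abstractmethod
    def read_button(self, name: str) -> bool: ...


class SimulatedBoard(Board):
    """Idealized board: no wiring faults, no contact bounce, instant response."""

    def __init__(self, num_leds: int = 8):  # LED count is an assumption
        self.leds = [False] * num_leds
        self.pressed = set()  # names of buttons currently held down

    def set_led(self, index: int, on: bool) -> None:
        self.leds[index] = on

    def read_button(self, name: str) -> bool:
        return name in self.pressed


def student_program(board: Board) -> None:
    """Example learner program: LED 0 is lit while button "N" is pressed."""
    board.set_led(0, board.read_button("N"))


sim = SimulatedBoard()
sim.pressed.add("N")  # simulate a button press
student_program(sim)
print(sim.leds[0])
```

Because `student_program` depends only on `Board`, a learner can first get the logic right in the simulator and only later face physical issues such as loose wires or noisy sensors.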

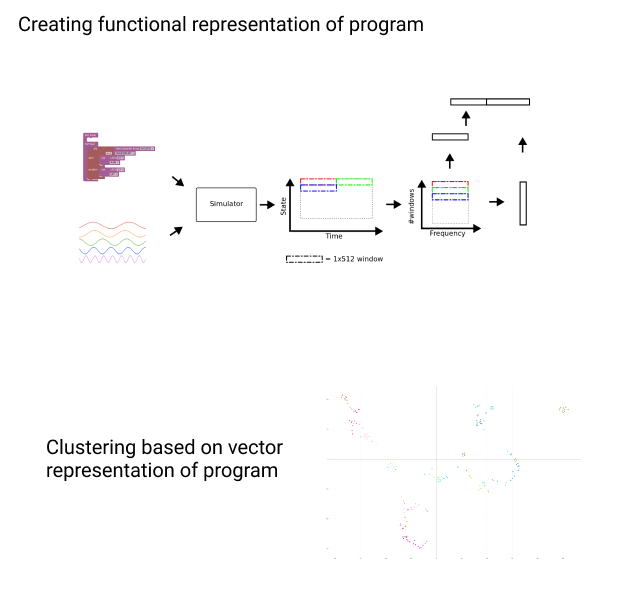

Towards AI driven feedback

Enabling teachers to be more effective requires the right tools. Using our programming environment, we collect data about how learners acquire programming skills. We leverage this data with machine learning techniques to give teachers the information they need to improve their students' learning efficiency. As a first step, we aim to create relevant visualizations that give teachers more insight into how their students learn. The second step is to use this information as input for an automated feedback system, further personalizing the learning experience.
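As one illustration of how such data could be turned into something a teacher can read, the sketch below groups students by simple interaction features (here, hypothetical per-student counts of code edits and program runs) using plain k-means clustering (Lloyd's algorithm). K-means is a generic technique chosen for this example and is not necessarily the method used in our work; the features, the `kmeans` function and its deterministic initialization from the first `k` points are assumptions made to keep the sketch short.

```python
from math import dist


def kmeans(points, k, iters=20):
    """Plain k-means; centroids are initialized with the first k points."""
    centroids = [list(p) for p in points[:k]]
    labels = [0] * len(points)
    for _ in range(iters):
        # Assignment step: each point goes to its nearest centroid.
        labels = [min(range(k), key=lambda c: dist(p, centroids[c])) for p in points]
        # Update step: move each centroid to the mean of its assigned points.
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:  # keep the old centroid if a cluster becomes empty
                centroids[c] = [sum(xs) / len(members) for xs in zip(*members)]
    return labels, centroids


# Toy (edits, runs) pairs per student, invented for illustration only.
students = [(1, 10), (2, 12), (20, 2), (22, 3)]
labels, centroids = kmeans(students, k=2)
print(labels, centroids)
```

Each resulting cluster could, for example, be shown to the teacher as a group of students with a similar working style.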

Publications

- Unsupervised functional analysis of graphical programs for physical computing. In 2020 International Conference on Computational Science and Computational Intelligence (CSCI), 2021.