Social Robots for Multimodal Interactions

About this research line

We investigate how social robots can engage in rich, multimodal interactions that combine speech, vision, gestures and touch, so that robots can function naturally in real-world environments.

In social robots, buttons and screen interfaces are replaced by verbal and non-verbal communication. All robots in science fiction are social robots; they are all able to understand human actions and engage with people. This is in stark contrast to most robots we see today, which remain separated from us. Industrial robots are kept apart from people: from the safety of a cage, they weld cars or fill boxes, unaware of the richness of human activity around them. Research in social robotics aims to change that, but creating social robots is a formidable challenge.

Social interaction is possibly one of the biggest challenges in artificial intelligence and robotics. As social interaction uses all faculties of the human brain, such as memory, language, semantics, and emotion, we need to create artificial equivalents of all these. We integrate the latest developments in AI and machine learning to build robots that understand and change the social world. Our goal is to embed this AI in physical robots that can fit into our human-inhabited environments.

Beyond the technical aspects of building social robots, we are also interested in how people interact with robots. This study of Human-Robot Interaction uses insights and methods from related scientific fields, such as social psychology and design, to uncover how we respond to social robots. What aspects of the design of a robot help us to trust the robot? How persuasive is a robot? Can a robot help you cope with problems?

Designing robots that blend multiple input and output modalities presents significant challenges. Our research focuses on developing algorithms that enable robots to process and generate coordinated responses across different interaction channels. In addition, we use multidimensional measurements (including subjective and physiological measurements) to explore how humans perceive and respond to these multimodal cues, ensuring that robots not only communicate effectively but also build trust and rapport with their users.

Multimodal Input

Multimodal dialogue systems enable robots to engage in conversations that go beyond speech, for instance by incorporating visual information and user metadata to enhance communication.

Vision-language models allow robots to understand visual cues and generate responses based on them, for example by recognising objects and complex situations without extensive modelling. This enables robots to initiate meaningful interactions, ask follow-up questions or provide relevant information based on the visual context. For example, if a user is wearing a bright red shirt, the robot could comment on it or use it as a reference point in the conversation.

In addition to visual cues, robots can also use user metadata, such as past interactions, preferences, and emotional states, to tailor their responses. Being aware of users’ preferences allows robots to align their behaviour with human values, ethics, and cultural norms. Value-aware robots must recognise and adapt to the moral and social expectations of their users, ensuring their actions are not only functional but also socially appropriate. We are developing frameworks that allow robots to reason and act according to human values, enabling them to make decisions that are sensitive to context and culture.
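As a rough illustration of how these input channels might be combined, the sketch below merges a spoken utterance, a list of visual cues, and user metadata into a single text context that a dialogue model could consume. It is a minimal sketch under stated assumptions, not the framework described here: the `UserProfile` class, its fields, and the `build_context` function are hypothetical names introduced only for this example.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class UserProfile:
    """Hypothetical user metadata; the field names are illustrative only."""
    name: str
    preferences: List[str] = field(default_factory=list)
    last_topic: Optional[str] = None


def build_context(utterance: str, visual_cues: List[str], profile: UserProfile) -> str:
    """Merge speech, visual cues, and user metadata into one text context."""
    lines = [f"User ({profile.name}) said: {utterance}"]
    # Each extra channel is added only when it carries information.
    if visual_cues:
        lines.append("Visible in the scene: " + ", ".join(visual_cues))
    if profile.preferences:
        lines.append("Known preferences: " + ", ".join(profile.preferences))
    if profile.last_topic:
        lines.append(f"Previous conversation topic: {profile.last_topic}")
    return "\n".join(lines)


profile = UserProfile(name="Alex", preferences=["gardening"], last_topic="the weather")
context = build_context("Good morning!", ["bright red shirt"], profile)
print(context)
```

Keeping each channel on its own labelled line is one possible design choice: it makes it easy to see which modality contributed which piece of context, and a missing channel simply drops out of the text.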

Multimodal input can improve the contextual understanding of robots in everyday environments.

Gestures and Touch Interactions

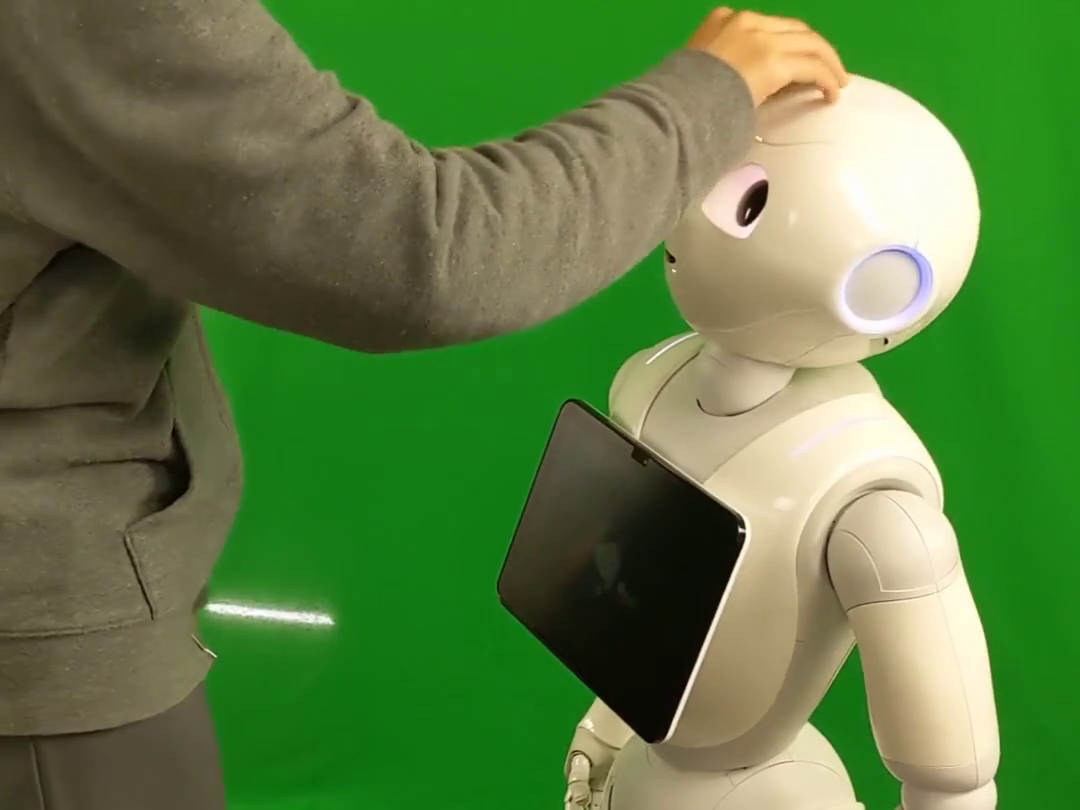

Gesture and touch are powerful forms of non-verbal communication that are often combined with speech to convey emotion, intention, and empathy. For instance, when a robot employs a gentle touch and expressive gestures alongside speech, these cues can enhance the clarity, emotional resonance, and social salience of its communication, thereby attracting greater human attention and engagement. By integrating insights from human-robot interaction, cognitive science, and neuroscience, we seek to develop design principles for social robots that can communicate attentiveness and empathy in a context-sensitive manner. We aim to develop human-centred social robots that can use these non-verbal cues to enhance emotional connection in human-robot interaction.

We explore different touch interactions with the Pepper robot.

Long-Term Interactions in Care Homes

Social robots have the potential to revolutionise long-term care by providing consistent, personalised support to people affected by loneliness and social isolation, such as residents in care homes. These robots can act as companions, offering emotional support, engaging in conversations, and assisting with daily activities. Over time, they can build meaningful relationships with residents, helping to combat loneliness and improve overall well-being.

Pepper robot in a care home.